The effect of non-Gaussian noise on the number of factors selected

Jason Willwerscheid

11/20/2019

Last updated: 2019-11-20


Knit directory: FLASHvestigations/

This reproducible R Markdown analysis was created with workflowr (version 1.2.0).


The command set.seed(20180714) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.



Previous versions of the R Markdown and HTML files:

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | 83d15cc | Jason Willwerscheid | 2019-11-20 | workflowr::wflow_publish("analysis/misspec_k.Rmd") |

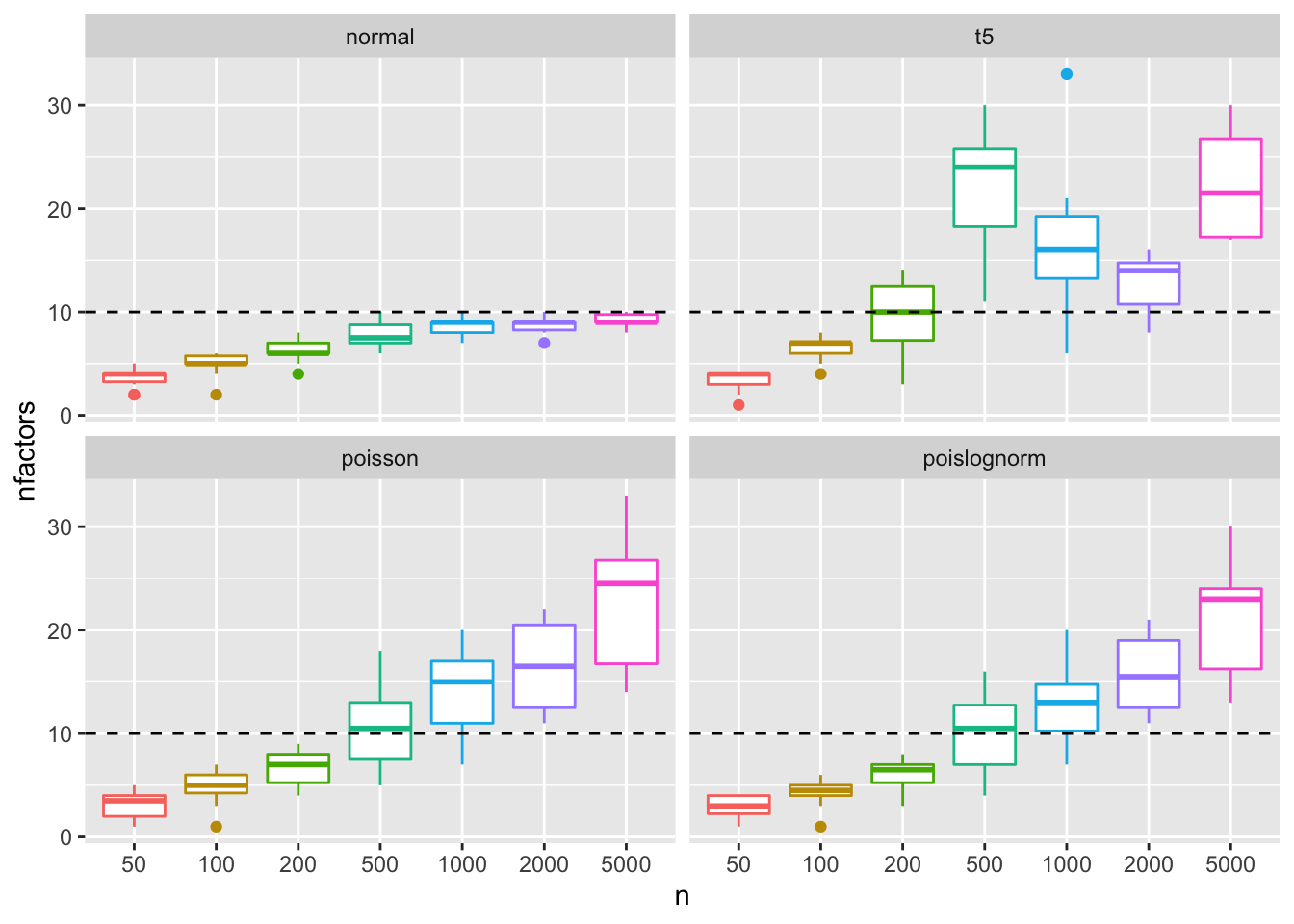

I run a series of simulations in which I simulate from the true EBMF model (with Gaussian noise) and then vary the noise model to see whether EBMF is still able to select the correct number of components.

The EBMF model is: \[ Y = LF' + E, \] where \(Y \in \mathbb{R}^{n \times n}\), \(L \in \mathbb{R}^{n \times k}\), \(F \in \mathbb{R}^{n \times k}\), and \(E \in \mathbb{R}^{n \times n}\). I fix \(k\) at 10, but I vary \(n\) from 50 to 5000.

For each trial and each choice of \(n\), I simulate factors and loadings from the following distributions: \[ \begin{aligned} L_{ik} &\sim \pi_{0\ell}^{(k)} \delta_0 + (1 - \pi_{0\ell}^{(k)}) N(0, \sigma_\ell^{(k)2}) \\ F_{jk} &\sim \pi_{0f}^{(k)} \delta_0 + (1 - \pi_{0f}^{(k)}) N(0, \sigma_f^{(k)2}) \end{aligned} \] \[ \begin{aligned} \pi_{0\ell}^{(k)}, \pi_{0f}^{(k)} &\sim \text{Beta}(0.5, 0.5) \\ \sigma_\ell^{(k)2}, \sigma_f^{(k)2} &\sim \text{Gamma}(1, 2) \end{aligned} \]
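The sampling scheme above can be sketched in base R. This is only an illustration, not the code used for the simulations: the helper name `sim_point_normal` is hypothetical, and I assume that Gamma(1, 2) is parameterized by shape and rate (the text does not say which parameterization is meant).

```r
# Sketch: draw an n x k matrix whose columns follow point-normal distributions.
# Assumption: Gamma(1, 2) means shape = 1, rate = 2.
sim_point_normal <- function(n, k) {
  pi0 <- rbeta(k, 0.5, 0.5)              # per-factor probability of an exact zero
  s2  <- rgamma(k, shape = 1, rate = 2)  # per-factor variance of the normal component
  sapply(seq_len(k), function(j) {
    nonzero <- rbinom(n, 1, 1 - pi0[j])  # 1 = drawn from the normal component
    nonzero * rnorm(n, sd = sqrt(s2[j]))
  })
}

set.seed(1)
n <- 50   # smallest value of n used in the text
k <- 10   # number of factors, fixed at 10 in the text
Lmat <- sim_point_normal(n, k)
Fmat <- sim_point_normal(n, k)
LF   <- Lmat %*% t(Fmat)   # the low-rank matrix LF'
```

Because the sparsity levels are drawn from Beta(0.5, 0.5), which puts most of its mass near 0 and 1, some factors will be nearly dense and others nearly empty, so the effective number of detectable factors in a given trial can be smaller than 10.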

From each low-rank matrix \(LF'\), I generate four different data matrices using different error models:

- The Gaussian model (i.e., the EBMF model): \[ Y = LF' + E, \] where \[ E_{ij} \sim N(0, 0.5^2) \]

- A heavier-tailed model: \[ \begin{aligned} Y &= LF' + E \\ E_{ij} &\sim 0.5 * t_5 \end{aligned} \]

- A Poisson model: \[ X \sim \text{Poisson}(\exp(LF')) \] In this case, I fit flash to the transformed data matrix \[ Y = \log(X + 1) \]

- A Poisson-lognormal model: \[ \begin{aligned} X &\sim \text{Poisson}(\exp(LF' + E)) \\ E_{ij} &\sim N(0, 0.5^2) \\ Y &= \log(X + 1) \end{aligned} \]
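The four error models listed above can be sketched as a single base R function that takes a low-rank matrix and returns the four data matrices. The function name `make_data`, its `scale` argument, and the small toy matrix used to exercise it are all assumptions made for illustration; the actual simulation code may be organized differently.

```r
# Sketch: build the four data matrices from one low-rank matrix LF.
# `make_data` and `scale` are hypothetical names.
make_data <- function(LF, scale = 0.5) {
  n <- nrow(LF)
  p <- ncol(LF)
  noise <- function(draws) matrix(draws, n, p)

  E_gauss <- noise(rnorm(n * p, sd = scale))        # N(0, 0.5^2)
  E_t     <- noise(scale * rt(n * p, df = 5))       # 0.5 * t_5
  X_pois  <- noise(rpois(n * p, exp(LF)))           # Poisson(exp(LF'))
  E_ln    <- noise(rnorm(n * p, sd = scale))
  X_pln   <- noise(rpois(n * p, exp(LF + E_ln)))    # Poisson(exp(LF' + E))

  list(
    gaussian          = LF + E_gauss,
    t                 = LF + E_t,
    poisson           = log(X_pois + 1),
    poisson_lognormal = log(X_pln + 1)
  )
}

# Toy rank-3 matrix, used only to exercise the function.
set.seed(1)
A  <- matrix(rnorm(20 * 3, sd = 0.5), 20, 3)
B  <- matrix(rnorm(20 * 3, sd = 0.5), 20, 3)
LF <- tcrossprod(A, B)   # A %*% t(B)
Y  <- make_data(LF)
```

One detail worth keeping in mind when comparing the first two models: a \(t_5\) variable has variance \(5/3\), so the noise \(0.5 \cdot t_5\) has variance \(0.25 \times 5/3 \approx 0.42\), compared with \(0.25\) for the Gaussian model. The heavier-tailed model therefore also has somewhat larger noise variance.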

The code used to run the simulations can be viewed here.

Results

As expected, flash selects an approximately correct number of factors when the model is correctly specified (recall that I fix \(k = 10\)) and when there is a sufficient amount of data. Under model misspecification, however, it is unable to do so. The behavior under Poisson and Poisson-lognormal noise is similar: we see the number of factors continue to increase, apparently without bound, as the size of the dataset gets larger. The behavior under \(t\)-noise is more erratic.
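To put numbers on these trends, the results could be tabulated before plotting. The sketch below assumes only that the results data frame has the columns `n`, `nfactors`, and `distn` used in the plotting code; the helper name `summarise_nfactors` is hypothetical.

```r
# Sketch: median number of selected factors for each noise model and each n.
# Assumes `res` has columns n, nfactors, and distn.
summarise_nfactors <- function(res) {
  aggregate(nfactors ~ distn + n, data = res, FUN = median)
}

# Usage, once the results file has been read in:
# summarise_nfactors(readRDS("./output/misspec_k/misspec_k.rds"))
```

A median close to 10 across trials would indicate that the correct number of factors is being recovered for that combination of noise model and \(n\).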

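library(flashier)
library(ggplot2)

# Load the saved simulation results (one row per trial).
res <- readRDS("./output/misspec_k/misspec_k.rds")

# Boxplots of the number of factors selected, by n, with one panel per noise model.
# The dashed line marks the true number of factors (k = 10).
ggplot(res, aes(x = n, y = nfactors, col = n)) +
  geom_boxplot() +
  geom_hline(aes(yintercept = 10), linetype = "dashed") +
  facet_wrap(~distn) +
  theme(legend.position = "none")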

sessionInfo()

#> R version 3.5.3 (2019-03-11)

#> Platform: x86_64-apple-darwin15.6.0 (64-bit)

#> Running under: macOS Mojave 10.14.6

#>

#> Matrix products: default

#> BLAS: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRblas.0.dylib

#> LAPACK: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRlapack.dylib

#>

#> locale:

#> [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

#>

#> attached base packages:

#> [1] stats graphics grDevices utils datasets methods base

#>

#> other attached packages:

#> [1] ggplot2_3.2.0 flashier_0.2.2

#>

#> loaded via a namespace (and not attached):

#> [1] Rcpp_1.0.1 pillar_1.3.1 compiler_3.5.3

#> [4] git2r_0.25.2 workflowr_1.2.0 iterators_1.0.10

#> [7] tools_3.5.3 digest_0.6.18 tibble_2.1.1

#> [10] evaluate_0.13 gtable_0.3.0 lattice_0.20-38

#> [13] pkgconfig_2.0.2 rlang_0.3.1 Matrix_1.2-15

#> [16] foreach_1.4.4 yaml_2.2.0 parallel_3.5.3

#> [19] ebnm_0.1-24 xfun_0.6 withr_2.1.2

#> [22] dplyr_0.8.0.1 stringr_1.4.0 knitr_1.22

#> [25] fs_1.2.7 tidyselect_0.2.5 rprojroot_1.3-2

#> [28] grid_3.5.3 glue_1.3.1 R6_2.4.0

#> [31] rmarkdown_1.12 mixsqp_0.2-4 purrr_0.3.2

#> [34] ashr_2.2-38 magrittr_1.5 whisker_0.3-2

#> [37] backports_1.1.3 scales_1.0.0 codetools_0.2-16

#> [40] htmltools_0.3.6 MASS_7.3-51.1 assertthat_0.2.1

#> [43] colorspace_1.4-1 labeling_0.3 stringi_1.4.3

#> [46] lazyeval_0.2.2 doParallel_1.0.14 pscl_1.5.2

#> [49] munsell_0.5.0 truncnorm_1.0-8 SQUAREM_2017.10-1

#> [52] crayon_1.3.4